Large language models are reshaping how users research vendors, compare options, and validate decisions before ever visiting a website. While traffic from AI tools such as ChatGPT, Claude, or Perplexity remains a small percentage of total sessions for most organizations, the behavior of these users often looks different once they arrive.

Marketing teams are beginning to notice patterns that do not align with traditional organic search assumptions. Some users land on deeper pages instead of homepages. Others convert within a single session after minimal navigation. In many cases, conversion paths are shorter and more decisive.

These shifts create measurement challenges. Analytics platforms are built around channel attribution models that assume discovery happens through a visible referrer. Large language models disrupt that assumption. Influence often occurs before the click, inside the AI conversation itself.

Understanding how AI-influenced discovery differs from traditional organic search is critical for interpreting performance trends and explaining them internally.

How Traditional Organic Search Shapes User Behavior

Organic search historically follows a recognizable pattern. A user enters a query into Google or another search engine, scans results, and clicks a link. The visit is recorded with clear referral data, and analytics platforms categorize the session as organic traffic.

User journeys from traditional search often involve multiple steps. Visitors may land on an informational blog post, browse related resources, compare service pages, and return later through another channel before converting. The funnel is visible. Each interaction is timestamped and attributed.

This model supports standard SEO reporting. Teams measure rankings, impressions, sessions, engagement metrics, assisted conversions, and revenue. Even when attribution is imperfect, the referral source provides directional clarity.

Large language models do not follow the same path.

How Large Language Models Influence Pre-Site Decisions

When users interact with large language models, they often receive synthesized answers instead of a list of links. The AI may summarize vendor capabilities, compare alternatives, explain tradeoffs, and highlight differentiators within the chat interface.

By the time a user clicks through to a website, much of the evaluation work has already happened. They may arrive with narrowed options and a clearer understanding of fit. This reduces exploratory browsing behavior.

Instead of reading multiple top-of-funnel blog posts, AI-influenced users frequently land directly on product, pricing, or solution pages. They may submit a form, request a demo, or initiate contact quickly because their research occurred upstream.

From an analytics perspective, the visit looks deceptively simple: a direct session, a short time to conversion, minimal pageviews. Without context, teams may misinterpret this as brand familiarity, paid retargeting success, or pure direct traffic growth.

In reality, the decision acceleration happened inside the language model.

Why AI-Influenced Traffic Is Difficult to Measure

Most analytics platforms rely on referrer data and UTM parameters to categorize sessions. Large language models often do not pass clear referral signals. Traffic may appear as direct, unassigned, or categorized inconsistently.

Even when a tool does pass a referrer, reporting standards are still evolving. Many organizations lack dedicated channel groupings for AI sources. Sessions become buried inside broader buckets.
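One way to keep these sessions from disappearing into broader buckets is a dedicated channel rule keyed on the referrer hostname. The sketch below is illustrative only: the hostname lists and the `classify_channel` function are assumptions, not part of any analytics platform, and should be checked against the referrers that actually appear in your own data.

```python
from urllib.parse import urlparse

# Hypothetical hostname lists; confirm against your own referrer reports.
AI_REFERRER_HOSTS = {"chatgpt.com", "chat.openai.com", "perplexity.ai", "claude.ai"}
SEARCH_ENGINE_HOSTS = {"google.com", "bing.com", "duckduckgo.com"}


def classify_channel(referrer: str) -> str:
    """Return a coarse channel label for a raw referrer URL."""
    if not referrer:
        # No referrer at all: this is where much AI-influenced traffic ends up.
        return "direct"
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in AI_REFERRER_HOSTS:
        return "ai_assistant"
    if host in SEARCH_ENGINE_HOSTS:
        return "organic_search"
    return "referral"
```

A rule like this only catches sessions where the AI tool passes a referrer. Sessions that arrive with no referrer still fall into "direct", which is why the behavioral signals discussed later matter.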

The deeper issue is structural. Analytics tools measure on-site behavior. They do not capture conversations that occur before a user clicks. If a prospect spends twenty minutes asking an AI tool about your competitors, pricing models, and implementation requirements, that influence remains invisible.

This creates tension with leadership teams. Organic traffic may remain flat or decline, yet conversion rates improve. Sales cycles shorten for certain cohorts. Marketing leaders are asked to explain what changed.

Without visibility into AI-influenced discovery, the explanation becomes speculative.

Recognizing Behavioral Signals of AI-Influenced Users

Although direct attribution is limited, certain behavioral patterns can indicate AI-influenced discovery.

First, monitor landing page distribution. An increase in first-session visits to bottom-of-funnel pages can signal upstream evaluation. If more users enter through pricing or solution comparison pages, they may have completed research elsewhere.

Second, analyze time to conversion. Users who convert in one session after landing on a high-intent page may have arrived pre-qualified. Compare this cohort to traditional organic visitors who require multiple touchpoints.

Third, review assisted conversion paths. If certain conversions lack the expected top-of-funnel interactions, it may indicate that early education occurred off-site.

Finally, look at qualitative inputs. Sales teams may report that prospects reference AI tools directly during calls. Support teams may notice users repeating phrasing that mirrors language model outputs.

These signals do not provide perfect attribution, but they offer directional insight.
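The first two signals can be approximated from exported session data. The following is a minimal sketch under stated assumptions: the `Session` record, its field names, and the `HIGH_INTENT_PREFIXES` list are hypothetical and would need to be mapped to whatever your analytics export actually contains.

```python
from dataclasses import dataclass

# Hypothetical URL prefixes treated as high-intent, bottom-of-funnel pages.
HIGH_INTENT_PREFIXES = ("/pricing", "/demo", "/solutions")


@dataclass
class Session:
    user_id: str
    session_number: int  # 1 means the user's first recorded session
    landing_path: str
    converted: bool


def is_high_intent_entry(session: Session) -> bool:
    """True for a first session that lands on a high-intent page."""
    return session.session_number == 1 and session.landing_path.startswith(
        HIGH_INTENT_PREFIXES
    )


def high_intent_entry_share(sessions: list[Session]) -> float:
    """Share of first sessions that land on a high-intent page."""
    first = [s for s in sessions if s.session_number == 1]
    if not first:
        return 0.0
    return sum(is_high_intent_entry(s) for s in first) / len(first)


def single_session_conversion_rate(sessions: list[Session]) -> float:
    """Share of high-intent first sessions that convert in that same session."""
    entries = [s for s in sessions if is_high_intent_entry(s)]
    if not entries:
        return 0.0
    return sum(s.converted for s in entries) / len(entries)
```

Tracking both numbers over time, rather than reading a single snapshot, is what makes them useful as directional indicators.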

Rethinking Conversion Measurement in an AI Discovery Environment

If large language models compress evaluation cycles, traditional funnel assumptions need adjustment. The classic awareness-to-consideration-to-decision model assumed visible interactions across channels. AI introduces a hidden stage.

Marketing teams should expand reporting frameworks to account for influenced behavior rather than strictly sourced traffic. Instead of asking only where a user came from, consider how ready they were upon arrival.

Segment users by entry depth, number of sessions to conversion, and interaction complexity. Compare cohorts that convert quickly against those that follow longer research journeys.
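As a rough illustration, this kind of segmentation can be expressed as a small cohort function. Everything here is an assumption made for the example: the `STAGE_BY_PREFIX` mapping, the cohort labels, and the input format of (entry path, sessions to conversion) pairs.

```python
from collections import Counter

# Hypothetical mapping from URL prefix to funnel stage; adjust to your site.
STAGE_BY_PREFIX = {
    "/blog": "top",
    "/resources": "top",
    "/services": "middle",
    "/pricing": "bottom",
    "/contact": "bottom",
}


def entry_stage(path: str) -> str:
    """Map a landing path to a funnel stage, or 'other' if nothing matches."""
    for prefix, stage in STAGE_BY_PREFIX.items():
        if path.startswith(prefix):
            return stage
    return "other"


def cohort(entry_path: str, sessions_to_conversion: int) -> tuple[str, str]:
    """Label a converting user by entry stage and conversion speed."""
    speed = "single_session" if sessions_to_conversion == 1 else "multi_session"
    return (entry_stage(entry_path), speed)


def cohort_counts(conversions: list[tuple[str, int]]) -> Counter:
    """Count converting users per (entry stage, speed) cohort."""
    return Counter(cohort(path, n) for path, n in conversions)
```

A growing ("bottom", "single_session") cohort alongside flat organic traffic would be consistent with evaluation happening before the visit, though it does not prove an AI source on its own.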

Align internal teams around intent signals rather than channel labels. High-intent landings combined with short engagement paths may indicate successful visibility inside AI systems even if traffic volume appears modest. Organizations that adapt their measurement mindset to account for pre-site influence will be better positioned to interpret performance shifts and make informed strategic decisions.

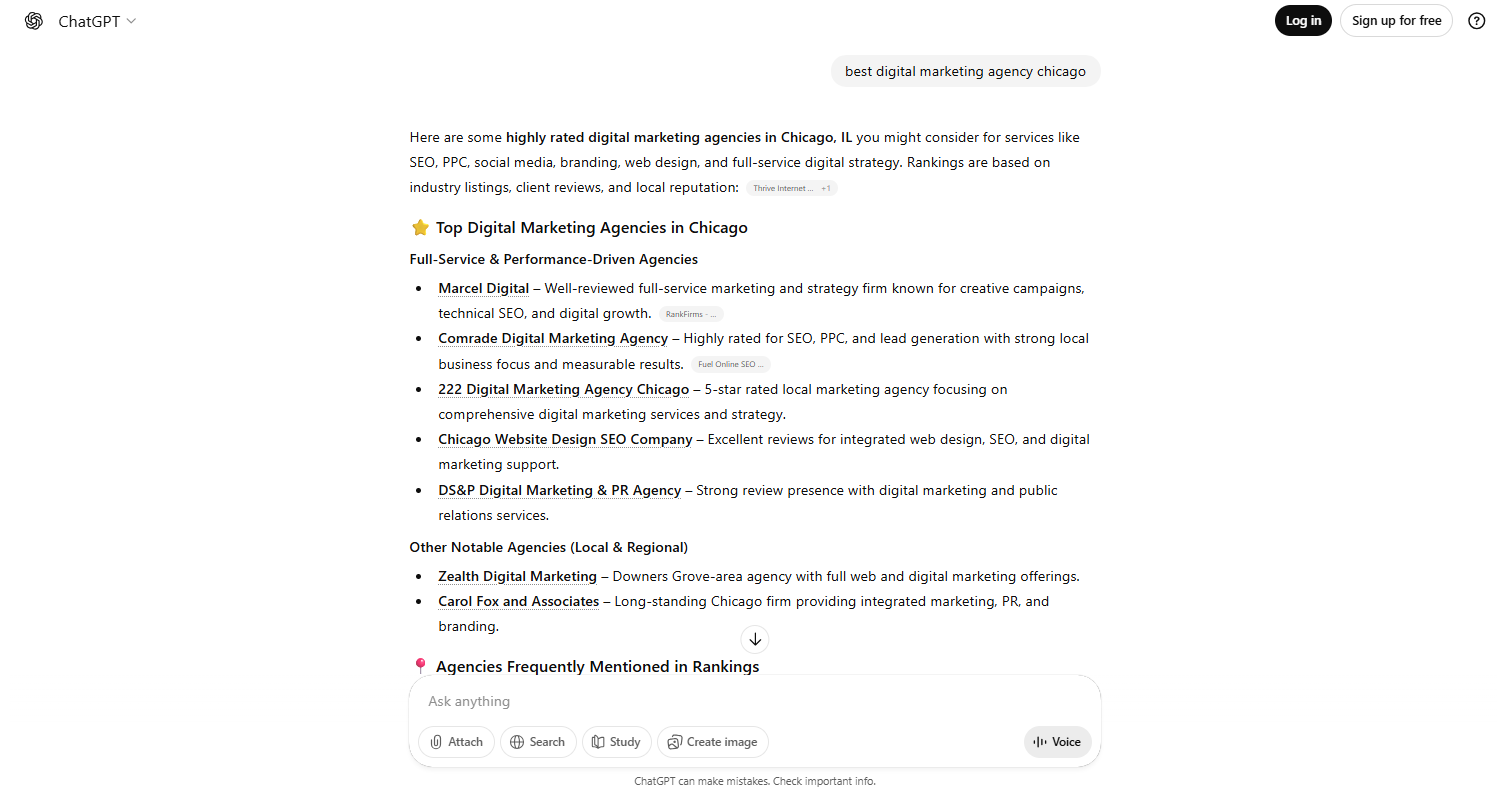

How Marcel Digital Helps Measure AI-Influenced Discovery

As large language models continue shaping how users research and evaluate solutions, organizations need clarity around performance shifts and conversion efficiency. Marcel Digital works at the intersection of SEO, Answer Engine Optimization, analytics, and conversion strategy to help teams interpret these changes with confidence.

Our team evaluates how AI visibility may be influencing landing behavior, conversion timing, and intent signals. We develop reporting frameworks that go beyond surface channel attribution and incorporate cohort analysis, entry depth segmentation, and assisted conversion insights. By aligning search strategy with analytics infrastructure, we help organizations connect discovery signals to measurable business outcomes.

If your team is seeing changes in conversion speed, landing page distribution, or unexplained direct traffic growth, it may be time to reassess how AI-influenced discovery is affecting your funnel. Contact us today to learn how Marcel Digital can help you measure, interpret, and optimize performance in an evolving search environment.

Frequently Asked Questions

Organic search typically sends users to a website through visible search engine results with clear referral data. Discovery influenced by large language models often occurs inside an AI interface, where research, comparison, and evaluation happen before the user clicks through to a site.

Large language models summarize information and answer detailed questions before a visit occurs. As a result, users may arrive with clearer intent, land on deeper pages, and take action more quickly than traditional organic visitors.

Most analytics platforms rely on referral data and on-site interactions to categorize traffic. Large language models often do not pass consistent referrer information, and conversations that shape user decisions happen outside the website, making influence difficult to capture directly.

Indicators can include first visits to high-intent pages, shorter engagement paths, fewer exploratory pageviews, and faster decision timelines. These patterns suggest that evaluation occurred prior to the site session.

Organizations should focus less on strict channel labels and more on intent signals, entry depth, and engagement patterns. Recognizing that part of the decision process now occurs before the site visit helps teams interpret performance shifts more accurately.