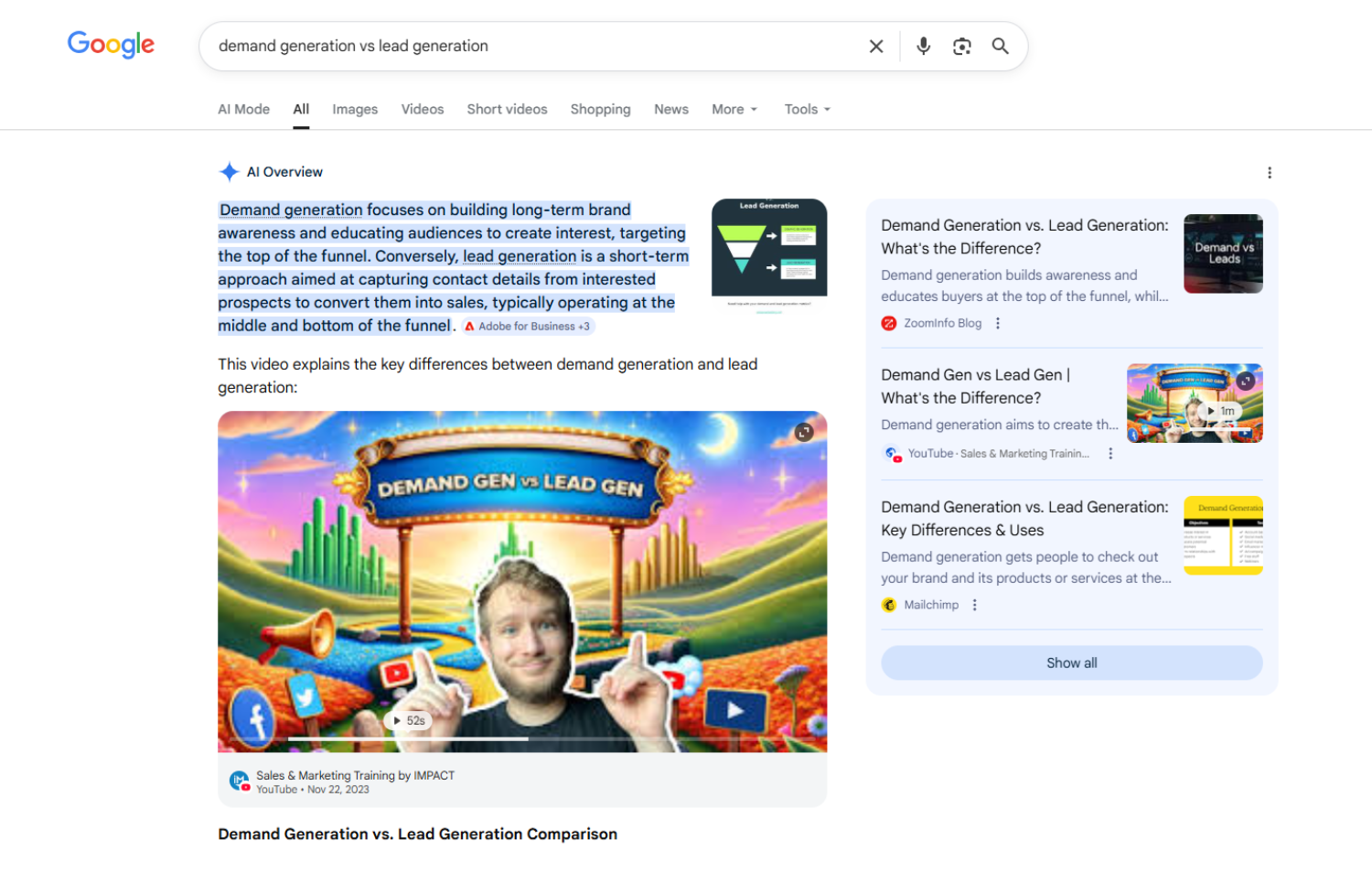

AI-driven search experiences are no longer limited to text results. AI overviews increasingly blend written summaries, images, product visuals, diagrams, and video clips into a single synthesized response. Instead of presenting ten blue links, search engines now assemble answers that combine multiple content formats into one cohesive experience.

This shift changes how users evaluate brands and how visibility is earned. Traditional text-first optimization strategies are no longer sufficient on their own. Organizations that rely heavily on blog content without a structured visual and video strategy risk losing ground in blended discovery environments.

Multimodal search is not a future concept. It is already influencing how content surfaces in AI overviews and generative search experiences. The organizations that adapt their planning and governance structures will gain disproportionate visibility.

How Multimodal Search Is Reshaping AI-Driven Visibility

AI overviews analyze content across formats. When responding to a query, generative systems may pull from written explanations, supporting diagrams, embedded video segments, product images, or comparison charts. These formats are synthesized into a unified result designed to answer the user’s question quickly and visually.

In this environment, content is evaluated not only for textual clarity but for visual reinforcement and media richness. Pages that include optimized images, structured video, and descriptive metadata provide more signals for AI systems to interpret.

Users also expect richer experiences. When researching a product, service, or complex concept, many users prefer to see demonstrations, charts, and real examples rather than reading long passages of text. Search engines are responding by elevating assets that support visual comprehension.

Text remains foundational, but it now competes alongside multimedia assets for prominence in AI-generated results.

The Risk of Overreliance on Text-Based Content

Many organizations built their organic strategy around long-form written content. Blog articles, landing pages, and resource hubs became the primary tools for earning rankings. While that approach remains important, it does not address the full scope of multimodal discovery.

When AI overviews integrate visuals and video directly into results, text-only strategies lose their competitive advantage. A comprehensive written explanation may be outranked by a page that includes diagrams, short video explainers, and structured image metadata.

Overreliance on text creates additional blind spots. Creative and production teams may treat visual assets as secondary enhancements rather than core discovery drivers. As a result, image and video assets are often created without structured optimization, governance standards, or alignment to search intent.

This creates a structural disadvantage in environments where multimedia signals influence visibility.

Unclear Ownership Across Teams Slows Adaptation

One of the most common barriers to multimodal readiness is organizational structure. SEO teams traditionally focus on keyword research, on-page optimization, and technical implementation. Creative teams manage design systems and brand assets. Video production often sits within separate content or social teams.

In a multimodal search landscape, these functions cannot operate independently.

If image alt text, file naming conventions, and structured metadata are not standardized, search systems struggle to interpret media assets accurately. If video content is produced without alignment to search intent or structured markup, it becomes difficult to surface in AI overviews.
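One way to make an alt text standard enforceable is to audit pages for it automatically. The following is a minimal sketch, not a production crawler: it uses Python's standard `html.parser` module to list images whose `alt` attribute is missing or blank. The class name, function name, and sample markup are hypothetical and exist only for illustration.

```python
from html.parser import HTMLParser


class ImageAltAudit(HTMLParser):
    """Collects <img> tags whose alt attribute is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []  # src values of images that need review

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # Assumption: blank alt text is flagged for review. An empty alt is
        # valid for purely decorative images, so treat hits as prompts to
        # check, not as automatic errors.
        if alt is None or not alt.strip():
            self.missing_alt.append(attributes.get("src", "(no src)"))


def find_images_missing_alt(html: str) -> list[str]:
    """Return the src of every image lacking descriptive alt text."""
    audit = ImageAltAudit()
    audit.feed(html)
    return audit.missing_alt


# Hypothetical page fragment used only to demonstrate the audit.
sample_page = """
<img src="/media/crm-dashboard-pipeline-view.png" alt="CRM dashboard showing the pipeline view">
<img src="/media/IMG_4032.png">
<img src="/media/hero.jpg" alt="">
"""

print(find_images_missing_alt(sample_page))
```

Run against the sample fragment, the audit flags the second and third images. A check like this could sit in a publishing workflow so that SEO and creative teams see the same list of gaps.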

Ownership ambiguity leads to inconsistent governance. Some teams prioritize storytelling. Others prioritize optimization. Without a unified strategy, media assets may look polished but fail to contribute meaningfully to discovery.

Organizations that clarify accountability and integrate search considerations into visual production workflows will adapt more effectively.

Connecting Visual Content to Measurable Business Outcomes

Another challenge lies in measurement. Many teams are under pressure to invest more heavily in video and visual assets, yet struggle to tie those efforts to revenue impact.

Traditional analytics frameworks focus on sessions, conversions, and lead generation from landing pages. Visual and video assets often lack clear attribution paths. Engagement metrics such as views or watch time do not always connect directly to pipeline creation.

To compete in multimodal search, measurement models must evolve. Media assets should be integrated into broader performance tracking frameworks that connect discovery, engagement depth, assisted conversions, and influenced revenue.
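To make the idea of assisted conversions concrete, here is a small, hedged sketch. It assumes each converting journey is available as an ordered list of touchpoint names, and it defines an "assist" as any asset that appears earlier in a converting path without being the final touch. Both the data shape and that definition are assumptions for illustration; analytics platforms define and expose these numbers in their own ways.

```python
from collections import Counter


def summarize_paths(converting_paths):
    """Count last-touch and assisted conversions per asset.

    converting_paths: list of ordered touchpoint lists, one per conversion.
    """
    last_touch = Counter()
    assisted = Counter()
    for path in converting_paths:
        if not path:
            continue
        final = path[-1]
        last_touch[final] += 1
        # Credit each earlier asset once per path, excluding the final touch.
        for asset in set(path[:-1]) - {final}:
            assisted[asset] += 1
    return last_touch, assisted


# Hypothetical journeys: the asset names are made up for the example.
paths = [
    ["explainer-video", "comparison-chart", "pricing-page"],
    ["blog-post", "explainer-video", "demo-request-page"],
    ["pricing-page"],
]

last_touch, assisted = summarize_paths(paths)
print("Last touch:", dict(last_touch))
print("Assisted:", dict(assisted))
```

In this toy data, the explainer video never closes a conversion but assists two of them, which is exactly the kind of contribution that a last-touch report alone would hide.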

When visual content is treated as a brand awareness tool rather than a measurable growth asset, it becomes difficult to justify sustained investment. Multimodal optimization requires governance structures that align creative production with business outcomes.

Metadata and Governance Are Competitive Advantages

Multimodal search rewards structured data and consistent metadata. Image alt attributes, descriptive file names, schema markup, and video transcripts provide interpretive context for AI systems.
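As an illustration of what schema markup for a video can look like, the sketch below builds a schema.org `VideoObject` as JSON-LD using only Python's standard library. The helper name, URLs, and field values are placeholders, and the property set is a reasonable starting point under those assumptions, not a complete or authoritative template.

```python
import json


def build_video_jsonld(name, description, thumbnail_url, upload_date,
                       content_url, transcript=None):
    """Return a schema.org VideoObject as a plain dict ready for JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,  # ISO 8601 date string
        "contentUrl": content_url,
    }
    if transcript:
        # The transcript is optional here, but it gives systems readable text.
        data["transcript"] = transcript
    return data


# Placeholder values: every URL and string below is hypothetical.
markup = build_video_jsonld(
    name="How to read a sales pipeline report",
    description="A two-minute walkthrough of the main pipeline report views.",
    thumbnail_url="https://example.com/media/pipeline-report-thumbnail.jpg",
    upload_date="2024-05-01",
    content_url="https://example.com/media/pipeline-report-walkthrough.mp4",
    transcript="In this video we walk through the pipeline report...",
)

# Wrap the JSON in the script tag that would be embedded in the page.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Generating the markup from one shared helper, rather than writing it by hand per page, is one way to keep video metadata consistent across teams.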

Many organizations publish images and videos without standardized naming conventions or structured descriptions. Media libraries become fragmented across content management systems, digital asset management tools, and social platforms.
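A naming convention is easier to follow when it is generated rather than remembered. The sketch below shows one possible convention, assumed here for illustration: lowercase words separated by hyphens, derived from a short human-written description. The function name and the rule itself are hypothetical; the point is that a single shared helper produces the same name regardless of which team uploads the asset.

```python
import re
import unicodedata


def descriptive_filename(description: str, extension: str) -> str:
    """Turn a short description into a lowercase, hyphenated file name."""
    # Fold accented characters to their closest ASCII equivalents.
    ascii_text = (
        unicodedata.normalize("NFKD", description)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Replace every run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")
    if not slug:
        raise ValueError("description must contain at least one letter or digit")
    return f"{slug}.{extension.lower().lstrip('.')}"


print(descriptive_filename("Café Seating Chart (Q3 2024)", ".PNG"))
print(descriptive_filename("CRM dashboard: pipeline view", "jpg"))
```

The two calls print `cafe-seating-chart-q3-2024.png` and `crm-dashboard-pipeline-view.jpg`.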

Inconsistent governance reduces discoverability. AI systems rely on contextual signals to determine relevance. When metadata is incomplete or misaligned with search intent, visibility suffers.

Establishing clear standards for media optimization is not a cosmetic exercise. It is a structural requirement for competing in AI-blended discovery environments.

Rethinking Content Planning for a Blended Discovery Model

Content planning must now account for format diversity from the outset. Instead of asking what blog topic to publish next, teams should ask how a topic can be expressed across text, image, and video formats.

A single concept might include a written explanation, a short explainer video, supporting diagrams, and visual summaries designed for reuse across platforms. Each format reinforces the other and provides additional signals for AI systems to interpret.

This approach requires coordination across SEO, content strategy, design, and production teams. Editorial calendars should integrate visual planning and dynamic ad creative strategies rather than treating multimedia assets as optional add-ons.

Organizations that treat images and video as primary discovery assets rather than supplementary enhancements will gain stronger visibility in AI overviews.

Structural Readiness Determines Competitive Advantage

Many organizations are not structurally prepared for multimodal search. They lack centralized governance for media assets, unified reporting frameworks, and cross-functional alignment between SEO and creative teams.

Adapting to AI-driven discovery does not require abandoning text strategy. It requires expanding it. Text provides clarity and context. Visual and video assets enhance comprehension and give AI systems more material to draw on when assembling results.

Structural readiness means establishing clear ownership of media optimization, aligning production workflows with search intent, and connecting multimedia performance to business outcomes.

As AI overviews continue blending formats into synthesized results, organizations that operate within siloed content models will struggle to compete.

How Marcel Digital Helps Organizations Compete in Multimodal Search

Marcel Digital helps organizations adapt to evolving AI-driven discovery environments by aligning SEO, content strategy, and media governance within a unified framework. This includes a dedicated focus on Answer Engine Optimization (AEO), ensuring content is structured not just to rank, but to be surfaced directly within AI-generated answers and overviews.

Our team evaluates how text, image, and video assets contribute to organic visibility and AI overview inclusion. We identify gaps in metadata, structured markup, and media optimization processes. From there, we establish governance standards that ensure visual and video assets are created with search intent and measurable performance in mind.

We also connect multimodal content efforts to analytics frameworks that track engagement depth, assisted conversions, and pipeline influence. This allows organizations to move beyond surface engagement metrics and understand how blended discovery contributes to revenue growth.

As AI overviews increasingly integrate visual and video elements into search results, multimodal readiness becomes a structural advantage rather than a creative preference.

If your organization is investing in content but relying primarily on text-based strategies, now is the time to evaluate how images and video are positioned within your discovery framework.

Contact Marcel Digital today to learn how a multimodal search strategy can strengthen visibility, improve attribution clarity, and align your content ecosystem with measurable business growth.

Frequently Asked Questions

Multimodal search refers to AI-driven search experiences that combine text, images, video, and other media formats into a single synthesized result. Instead of presenting only written links, AI systems assemble answers using multiple content types to improve clarity and context.

AI overviews analyze written content alongside supporting visuals, diagrams, and video segments. Media assets that include structured metadata and align clearly with search intent are more likely to be incorporated into blended search responses.

Search engines increasingly evaluate visual and video assets as part of relevance and visibility signals. Organizations that rely exclusively on written content may lose visibility to competitors that reinforce their content with optimized images and video.

Businesses should implement descriptive file naming, accurate alt attributes, structured schema markup, video transcripts, and consistent metadata governance. Media assets should align with search intent and be planned as part of a broader content strategy.

Multimodal performance should be evaluated using engagement depth, assisted conversions, and influenced pipeline metrics within broader reporting frameworks. Connecting media engagement to measurable outcomes helps clarify how blended discovery contributes to business growth.